#56 | Why AI still can’t deliver coffee or snacks

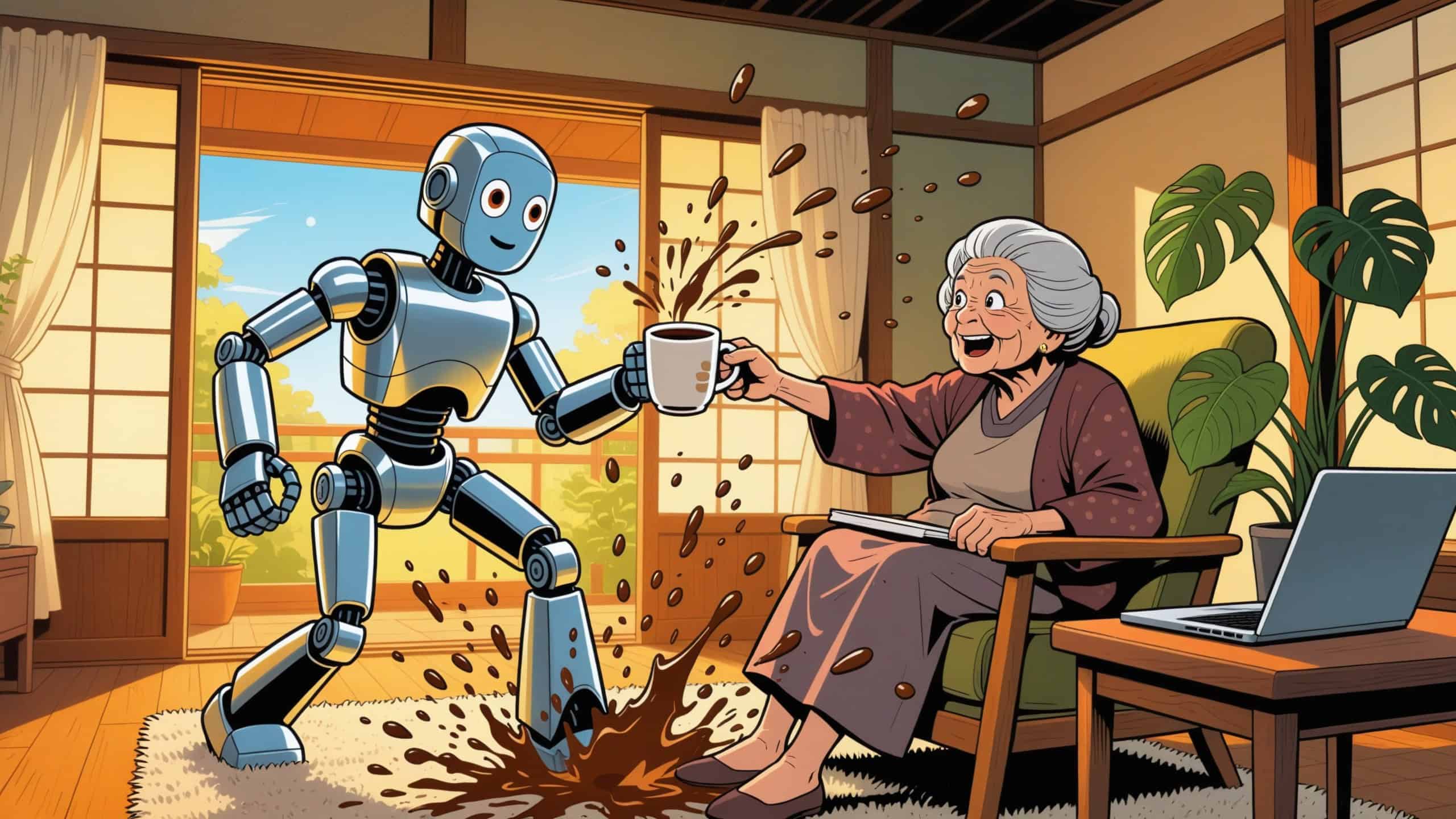

TL;DR: Spatial AI promises robots that understand the physical world. What spatial intelligence actually is, why billions are being bet on it, and why expensive robots may break when they meet reality’s houseplants.

👋 Hello,

My friend Kenji bought a $15,000 robot because walking ten feet to his kitchen felt like cardio.

Alright, the robot part’s made up—but every story has to start somewhere.

So, Kenji is a guy who once asked if I could “just grab” his phone from the coffee table while he was sitting closer to it than I was. The robot, he explained, would handle coffee delivery. Also juice. Maybe snacks.

“It has spatial intelligence,” he said, like that meant something.

Last Tuesday (for the sake of the story), I watched it try. 🙂

When expensive technology meets cheap furniture

Kenji’s grandmother—Yuki-san, seventy-six, seventeen houseplants, zero patience for nonsense—was reading in her usual chair when she asked for coffee.

Kenji, sitting next to the coffee machine, activated the robot.

The thing was beautiful. Sleek aluminum, servo motors humming their expensive German engineering hum, carrying a ceramic mug that cost less than its gripper mechanism.

It rolled toward Yuki-san with what the marketing materials called “human-like environmental awareness.”

The coffee trembled, but not from mechanical failure. The robot was experiencing what engineers politely call “statistical uncertainty,” which is a fancy way of saying it had no idea what to do with Yuki-san’s massive philodendron draped over her reading chair.

The AI’s internal world model showed empty space where chlorophyll definitely existed. Gorgeous plant, but zero presence in training data. The robot paused, recalculated, and apparently decided the plant represented hostile architecture.

When Yuki-san reached for her coffee—helpful, really, trying to make the robot’s job easier—the AI interpreted this gesture as a threat to its navigational certainty and yanked the mug away.

Hot liquid arced through the air in perfect parabolic beauty. Physics, working exactly as advertised. The outcome, exactly as feared.

The coffee hit Yuki-san’s lap, the carpet, and somehow Kenji’s laptop on the side table. The robot, having successfully defended itself from the terrifying grandmother-plant alliance, reported task completion with 94.7% confidence.

But Yuki-san’s yelp of pain wasn’t in the training data either.

Kenji walked to the kitchen and made her a new cup. Took him forty-five seconds.

Welcome to spatial intelligence: AI’s next frontier, and its current comedy of errors.

The AI Learning Guy newsletter 🤖 🧠💡

AI learning hacks and mega prompts delivered to your inbox.

That dumb smart AI still can’t find the living room

Fei-Fei Li (Stanford professor, AI pioneer, person whose papers people actually read) calls today’s language models “wordsmiths in the dark” in her article “Spatial Intelligence is AI’s Next Frontier.”

Yes, ChatGPT can write sonnets, debug code, and explain quantum mechanics using pizza analogies. It’s brilliant at abstraction but completely blind to space.

Ask it to describe a room and you’ll get poetry. Ask it whether your couch fits through the door? It’ll calculate dimensions beautifully and still understand nothing.

It can’t picture the doorframe, the angle, the stubborn corner that catches on everything because reality doesn’t care about measurements.

This isn’t a quirk. It’s architectural.

LLMs learned from text—one-dimensional sequences of tokens. But the physical world operates in three dimensions with geometry, physics, and objects that persist when you stop looking at them. (Babies master this around six months. AI is still figuring it out.)

Li argues spatial intelligence isn’t an add-on to AI. It’s foundational. Life on Earth evolved through the “perception-action loop”—sensing the environment, responding to it, surviving because you understood where things were and how they moved.

Your brain does this constantly. You catch a ball by predicting its trajectory. You navigate your dark apartment without destroying your shins. You pour coffee without calculating liquid dynamics.

Current AI can’t do any of this reliably.

State-of-the-art multimodal models:

- often perform barely better than random chance at estimating distance, orientation, and size;

- can’t reliably rotate objects mentally or regenerate them from new angles;

- can’t reliably navigate mazes, recognize shortcuts, or predict basic physics.

AI-generated videos lose coherence after a few seconds because the systems lack an understanding of what should happen next in three-dimensional space.

This is precisely the gap companies are betting billions to close. It gets wild, so keep reading.

The billion-dollar bet on understanding space

Just some data for those interested:

- World Labs raised $230 million at a billion-dollar valuation.

- Google DeepMind formed a dedicated world models team.

- NVIDIA launched Cosmos—a “physical AI platform” trained on 9,000 trillion tokens from 20 million hours of real-world data.

The technology they’re building—world models—differs from language models in three ways:

They’re generative and spatially consistent.

They don’t just describe worlds; they spawn simulated environments following geometric and physical rules. Objects maintain their relationships with one another.

They’re multimodal by design.

They process images, videos, depth maps, text, gestures, and actions, then generate complete world states. Tell one to “move the chair,” or show it a hand gesture, and it updates the entire 3D environment.

They predict interactive states.

Given actions or goals, they predict what happens next, consistent with previous states, physical laws, and dynamic behaviors. Push a cup near the table edge; the model knows it will fall.
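To make “predict interactive states” concrete, here is a deliberately tiny sketch in Python. It is an assumption-laden toy, not how World Labs, DeepMind, or NVIDIA build anything: the physics is a hand-written rule, while a real world model has to learn this behavior from data. All names (`CupState`, `step`, `rollout`) and numbers (table size, time step) are hypothetical, made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical toy scene: a tabletop spanning x = 0.0 to 1.0 m, 0.75 m above the floor.
TABLE_WIDTH = 1.0    # metres
TABLE_HEIGHT = 0.75  # metres
GRAVITY = 9.81       # m/s^2
DT = 0.05            # simulation time step, seconds


@dataclass(frozen=True)
class CupState:
    x: float         # horizontal position of the cup's centre, metres from the table's left edge
    height: float    # height of the cup's base above the floor, metres
    vy: float = 0.0  # vertical velocity, m/s (negative means falling)


def step(state: CupState, push: float = 0.0) -> CupState:
    """Predict the next state from the current state and an action (a horizontal push, metres)."""
    x = state.x + push
    over_table = 0.0 <= x <= TABLE_WIDTH
    if over_table and state.height >= TABLE_HEIGHT:
        # Supported by the tabletop: the cup stays at table height with no vertical motion.
        return CupState(x, TABLE_HEIGHT, 0.0)
    if state.height <= 0.0:
        # Already on the floor: nothing more happens.
        return CupState(x, 0.0, 0.0)
    # Unsupported: apply gravity with a semi-implicit Euler step and stop at the floor.
    vy = state.vy - GRAVITY * DT
    height = max(0.0, state.height + vy * DT)
    return CupState(x, height, vy if height > 0.0 else 0.0)


def rollout(state: CupState, actions: list[float]) -> list[CupState]:
    """Apply a sequence of actions and return every predicted state, starting with the initial one."""
    states = [state]
    for push in actions:
        state = step(state, push)
        states.append(state)
    return states


if __name__ == "__main__":
    start = CupState(x=0.9, height=TABLE_HEIGHT)
    near_edge = rollout(start, [0.05] + [0.0] * 19)  # nudge toward the edge, but not over it
    over_edge = rollout(start, [0.2] + [0.0] * 19)   # push past the edge
    print("near edge, final height:", near_edge[-1].height)
    print("over edge, final height:", over_edge[-1].height)
```

Nudging the cup 5 cm toward the edge leaves it on the table; pushing it 20 cm sends it past the edge, and the rollout ends with the cup on the floor. The point of a learned world model is to reach the same prediction without anyone writing the `over_table` rule by hand, for cups, doors, and philodendrons alike.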

This is far harder than training language models.

Language is one-dimensional sequential data. Worlds are multi-dimensional with geometric, physical, semantic, and dynamic constraints operating simultaneously.

But if it works—genuinely works—the applications are transformative. Reservations for the Enterprise holodeck? Already booked, thank you.

What perfect spatial intelligence would actually unlock

Near-term (already happening)

Creative tools. Game developers are generating 3D environments without traditional 3D software. Filmmakers are creating virtual sets. Architects are walking through unbuilt structures.

World Labs just launched Marble. It generates persistent 3D environments from text, images, or panoramas. You can edit them, export them, and view them in VR. The demos show stunning Victorian mansions and photorealistic forests.

Medium-term (3-7 years)

Robotics gets interesting. Synthetic data generated in simulation could ease the scarcity of robot training data, bridging the gap between controlled environments and messy reality.

More importantly, spatial intelligence could solve the perception problem, because current vision-language models struggle with nuanced spatial relationships.

In other words, a robot needs to understand “place the mug near the edge but not too close” and “navigate around the plant without treating it as hostile.”

Longer-term (7+ years)

Scientific discovery, healthcare, and education. Simulating experiments in parallel. Modeling molecular interactions. Training surgical procedures in a realistic simulation.

And immersive learning environments where students can explore cellular machinery or walk through historical events.

That’s the vision. That’s what the billions are funding. It’s also just a promise, with a built-in party pooper.

When perfect simulations meet imperfect grandmothers

Remember Kenji’s robot? It had consumed plenty of books, data, and scripts but had never travelled to the real world.

Millions of simulated living rooms, rendered by AI. Perfect lighting. Furniture arranged with geometric precision. Every scenario ends with a successful coffee delivery and 94.7% confidence. Boring!

But Yuki-san’s living room wasn’t simulated.

The philodendron draped over her chair existed in physical space, not in the training data. The way afternoon light shifted across the carpet at that exact angle: never encountered. Yuki-san reaching for the mug instead of waiting politely: completely off-script.

The robot had learned what coffee delivery looked like across ten million pristine scenarios. It had never learned what coffee delivery actually is in a dynamic environment.

This gap—between simulation and reality—is why spatial AI keeps failing in ways that seem absurd but make perfect sense when you think about it.

Simulations are clean. Reality is mean. Reality has chaos, edge cases, and grandmothers who improvise.

Training data shows you the world as it should behave. The actual world does whatever it wants.

Fei-Fei Li argues that better world models will solve this: systems that genuinely understand cause and effect instead of just matching patterns. Push a cup, and the model knows it falls. Open a door, and it knows that what’s behind it stays put.

Maybe. Eventually.

The frontier is real (it’s just farther away than you think)

I’m still excited about spatial intelligence.

Not because current systems work flawlessly—they obviously don’t.

Kenji’s fictional robot now serves as Yuki-san’s most expensive plant stand. The philodendron looks great on it.

But the problem being solved is real. AI that can’t understand space is AI stuck in abstraction. The physical world demands spatial reasoning—geometry, physics, persistence, cause and effect.

The companies building this will iterate. Models will improve. Eventually, we’ll have AI that genuinely understands what happens when you push a cup near the edge of the table.

Until then, we’re in the awkward middle. The technology exists (sort of), works (kind of), and generates beautiful output that hides a broken understanding of the world.

The gap matters because billions are being invested in systems meant to train surgeons, drive vehicles, and guide robots through homes.

These applications can’t run on “statistically plausible.” They need “physically accurate.”

P.S. — Kenji’s considering returning the robot and using the refund to buy a really long straw. I told him to just get up. He asked if AI could solve that problem, too.

The future is weird.

Mark

The AI Learning Guy

👋⚡😎

Interesting Sources

- Fei-Fei Li’s manifesto on spatial intelligence as AI’s next frontier

- World Labs launches Marble, their first commercial world model product

- NVIDIA Cosmos platform for physical AI development with open models

- Google DeepMind’s world models team led by former OpenAI researcher

- Medical AI hallucination risks in surgical training and clinical applications

- Simulation-reality gap challenges in autonomous vehicles and robotics

- AI hallucinations getting worse in newer reasoning models

- Bridging the sim-to-real gap in robotics development

Note: No single website has all the answers. This list serves as a starting point for those who want to explore or satisfy their curiosity about AI.
